A sequence input layer inputs sequence data to a neural network. An LSTM layer is an RNN layer that learns long-term dependencies between time steps in time series and sequence data. An LSTM projected layer is an RNN layer that learns long-term dependencies between time steps in time series and sequence data using projected learnable weights. A bidirectional LSTM (BiLSTM) layer is an RNN layer that learns bidirectional long-term dependencies between time steps of time series or sequence data.


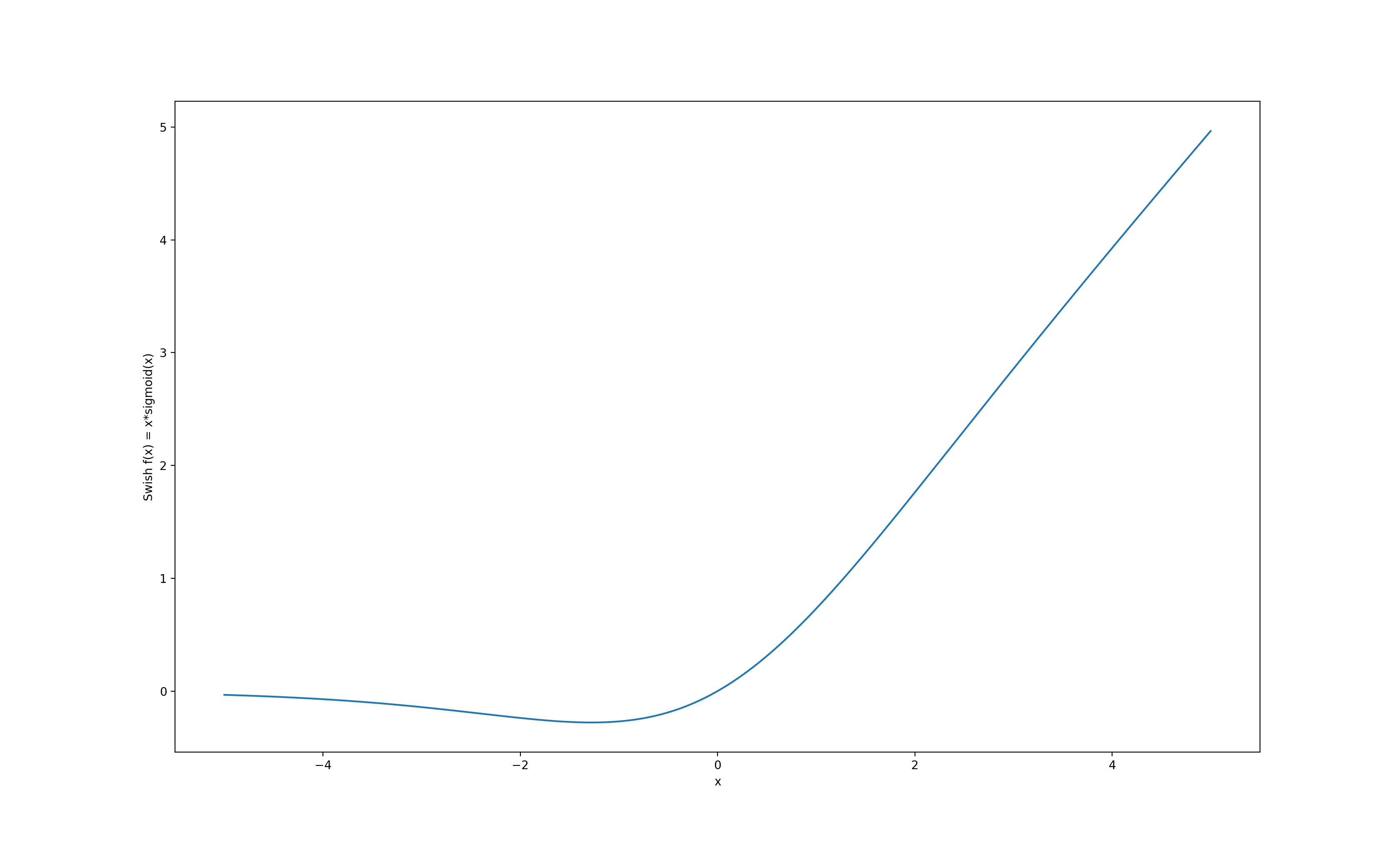

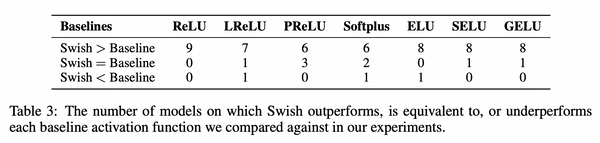

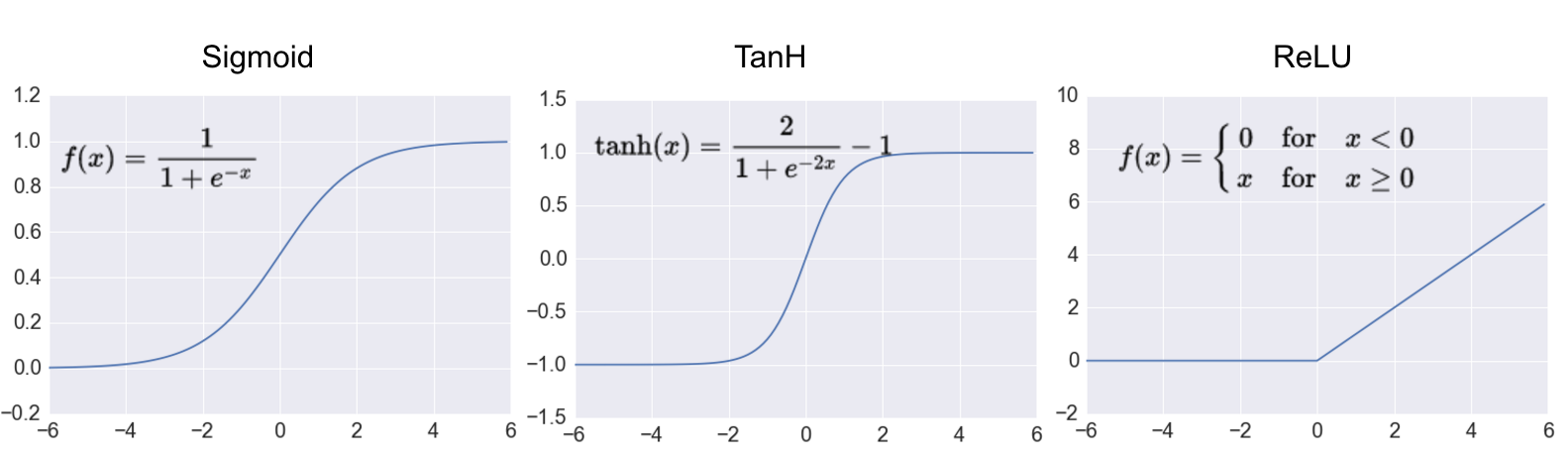

Activation functions are one of the key parameters in deep neural networks. They help decide the output and its functionality for a given application. In software, a variety of activation functions, such as sigmoid, tanh, ReLU, ELU, GELU, SELU, and SiLU/Swish, have been investigated for machine learning applications, whereas hardware implementation of activation functions receives comparatively little attention in the literature. This paper proposes a novel hardware design of the swish (self-gated) activation function for analog applications using Resistive Random Access Memory (RRAM). The approximate analog equivalent model of the swish circuit helps capture the incremental changes in the output voltage or current. A periodic analysis of the swish equivalent model has been performed on parameters such as power, area, and total harmonic distortion at different temperature ranges. The analog hardware design of the swish circuit has been implemented in the UMC 180 nm technology node. The simulation results show a low power of 498 μW for the swish circuit. The area occupied by the swish circuit is 15.12 μm².
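For readers unfamiliar with the function itself, swish (also called SiLU when the scaling factor is 1) is commonly defined in software as the input multiplied by its own sigmoid, which is why it is described as self-gated. The sketch below is a plain software reference, not a model of the RRAM circuit: the function names and the `beta` parameter are illustrative assumptions and do not come from the paper. The derivative is included only because it loosely corresponds to the incremental (small-change) behaviour an equivalent model would capture.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def swish(x: float, beta: float = 1.0) -> float:
    """Software swish: x * sigmoid(beta * x). beta is an assumed scaling factor."""
    return x * sigmoid(beta * x)


def swish_derivative(x: float, beta: float = 1.0) -> float:
    """Slope of swish at x: s + beta * x * s * (1 - s), with s = sigmoid(beta * x)."""
    s = sigmoid(beta * x)
    return s + beta * x * s * (1.0 - s)


if __name__ == "__main__":
    # Print a few sample points of the function and its slope.
    for v in (-4.0, -1.0, 0.0, 1.0, 4.0):
        print(f"x={v:+.1f}  swish={swish(v):+.4f}  slope={swish_derivative(v):+.4f}")
```

Unlike ReLU, the function is smooth and slightly negative for small negative inputs, which is the shape any hardware approximation has to reproduce.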
